Fit a linear model by ridge regression over many values of the regularization parameter

Details

Adapts the SVD-based ridge fitting algorithm from MASS::lm.ridge(), adding support for an unpenalized design matrix and for weights.
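
The general idea can be illustrated with a minimal sketch. It is not the package's implementation: `ridge_svd_sketch` is a hypothetical helper, it ignores weights, and it does not rescale the predictors the way MASS::lm.ridge() does, so it should not be expected to reproduce the numbers in the Examples. It assumes the unpenalized design can be handled by first residualizing `y` and `X` on it, after which a single SVD yields the penalized coefficients for every `lambda`.

```r
# Hypothetical helper for illustration only; not part of the documented API.
# Assumes no weights and no rescaling of the columns of X.
ridge_svd_sketch <- function(y, design, X, lambda) {
  X <- as.matrix(X)

  # Residualize y and X on the unpenalized design matrix
  qrD <- qr(design)
  y_r <- qr.resid(qrD, y)
  X_r <- qr.resid(qrD, X)

  # A single SVD serves every value of lambda
  s <- svd(X_r)
  uty <- drop(crossprod(s$u, y_r))  # U' y_r

  # shrink[k, j] = d_k / (d_k^2 + lambda_j)
  shrink <- outer(s$d, lambda, function(d, l) d / (d^2 + l))

  # Column j holds the penalized coefficients for lambda[j]
  coef <- s$v %*% (shrink * uty)
  dimnames(coef) <- list(colnames(X), as.character(lambda))

  # Unpenalized coefficients: least squares on what X leaves unexplained
  coef_design <- qr.coef(qrD, y - X %*% coef)

  list(coef = coef, coef_design = coef_design)
}
```

Because the decomposition is computed once and only the shrinkage factors depend on `lambda`, fitting a whole grid of penalties costs little more than fitting one.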

Examples

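# response: GNP deflator

y = longley$GNP.deflator

# unpenalized design matrix: intercept only

design = model.matrix(~ 1, longley)

# penalized predictors: every column except the response

X = longley[,-1]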

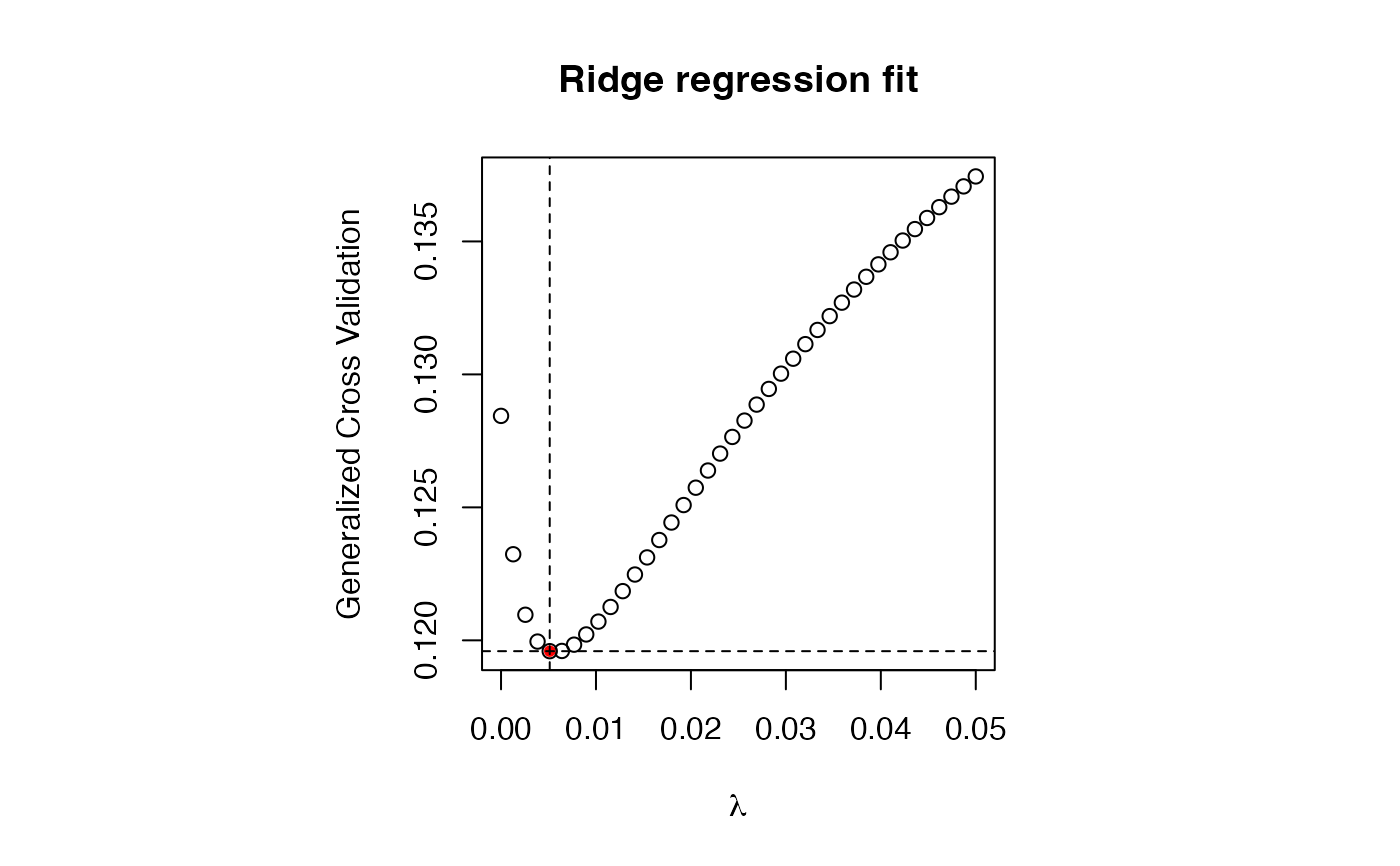

lambda = seq(0, .05, length.out=40)

fit = lmRidge(y, design, X, lambda = lambda)

par(pty="s")

plot(fit)

# get coefs with best GCV

i = which.min(fit$GCV)

fit$coef[,i,drop=FALSE]

#> 0.005128205

#> GNP 17.6910338

#> Unemployed 1.8533830

#> Armed.Forces 0.4525287

#> Population -9.2444737

#> Year 0.6904862

#> Employed -0.1858366

fit$coef_design[,i,drop=FALSE]

#> 0.005128205

#> (Intercept) 101.6812

# predict

predict(fit,

newdata = list(design = design, X = X),

lambda = fit$lambda[i])

#> 0.005128205

#> 1947 83.70544

#> 1948 86.87336

#> 1949 88.14397

#> 1950 90.87599

#> 1951 95.90758

#> 1952 97.69792

#> 1953 98.49161

#> 1954 100.11293

#> 1955 103.26701

#> 1956 105.16366

#> 1957 107.47808

#> 1958 109.44567

#> 1959 112.71412

#> 1960 113.94012

#> 1961 115.38703

#> 1962 117.69551
